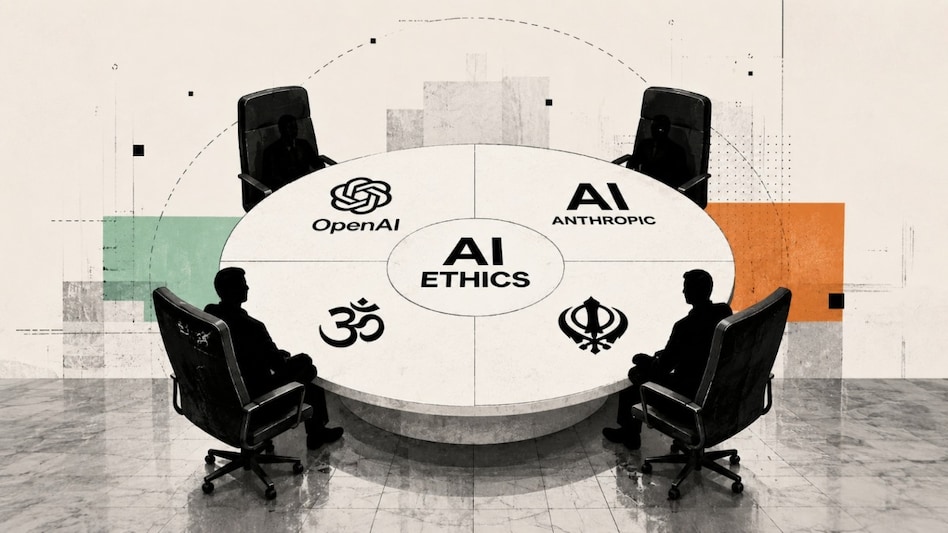

OpenAI, Anthropic turn to Hindu, Sikh, Christian leaders to teach AI morality

AI firms are consulting religious and ethics groups as concerns grow over how future AI systems will make moral decisions in an AGI-driven world.

- May 11, 2026

- Updated May 11, 2026 1:23 PM IST

Artificial intelligence (AI) systems today can write code, generate images, solve complex calculations and even automate workflows. But one thing AI still struggles with is understanding morality.

Now, some of the world’s leading AI companies, including OpenAI and Anthropic, are turning to religious leaders to help shape the ethical values of future AI systems.

According to a report by the Associated Press, executives from OpenAI and Anthropic met leaders from multiple faiths in New York last week during the inaugural “Faith-AI Covenant” roundtable. The discussions focused on how religious and moral principles could influence the development of advanced AI systems.

This comes at a time when concerns are rising globally about the future behaviour of increasingly powerful AI models.

The meeting reportedly included representatives from the Hindu Temple Society of North America, the Baha’i International Community, the Sikh Coalition, the Greek Orthodox Archdiocese of America and The Church of Jesus Christ of Latter-day Saints.

The event was organised by the Geneva-based Interfaith Alliance for Safer Communities, an organisation that works on issues including extremism, radicalisation and human trafficking. According to the AP report, similar discussions may also be held in cities such as Beijing, Nairobi and Abu Dhabi.

Baroness Joanna Shields, a former executive at Google and Facebook who was involved in the initiative, told AP that AI developers recognise the significance of the technology they are building.

“This direct connection is so important because the people who are building this understand the power and capabilities of what they’re building and they want to do it right — most of them,” Shields said.

She added that the long-term goal of the initiative is to help establish a set of ethical norms and principles for AI systems, drawing on multiple faith communities, including Christians, Sikhs and Buddhists.

The effort, however, also highlights a core challenge in AI ethics: different religions and cultures often have differing moral frameworks, making it difficult to define universal principles for AI systems.

The discussions also reflect a broader trend within the AI industry. Companies such as Anthropic and Google DeepMind have increasingly hired philosophers and ethics researchers to work on aligning AI systems with human values.

Earlier this year, The Washington Post reported that Anthropic hosted around 15 Christian leaders in San Francisco to discuss the moral and spiritual direction of its AI chatbot Claude.

Anthropic has publicly emphasised the importance of values-driven AI development. In its published “Claude Constitution”, the company states: “We want Claude to do what a deeply and skillfully ethical person would do in Claude’s position.”

The company has previously said that its AI principles were developed with input from religious and ethics experts.

For Unparalleled coverage of India's Businesses and Economy – Subscribe to Business Today Magazine
