On October 11, a man in Turkey committed suicide by shooting himself while streaming it live on Facebook through his smartphone.

Even after the phone fell down, disturbing noises could be heard.

What's worse, Facebook failed to take down the video for almost two days, during which it was shared repeatedly and went viral.

In April, an 18-year-old student from the US posted a video on Instagram before taking his life, saying, 'Hey, so, I'm killing myself. Goodbye'.

This 12-second clip was seen by over 15,000 people before it was taken down two days later.


Social media companies are struggling to handle these cases and are looking for ways to prevent such horror from playing out on their platforms.

Facebook introduced suicide prevention tools earlier this year and formed a team to review worrisome posts by users.

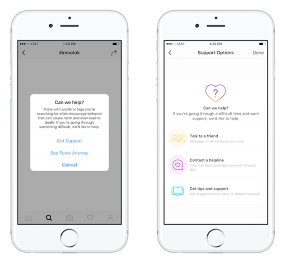

Now Instagram, which is owned by Facebook, has introduced similar tools to help prevent self-harm among its users.

HOW IT WORKS

This move comes after Instagram took steps to limit abusive content on its app. In September, the photo and video sharing app made it possible for users to filter the comments on their posts using customizable block lists. Users could bar anyone from posting explicit words or any form of bullying content in their comments sections.
